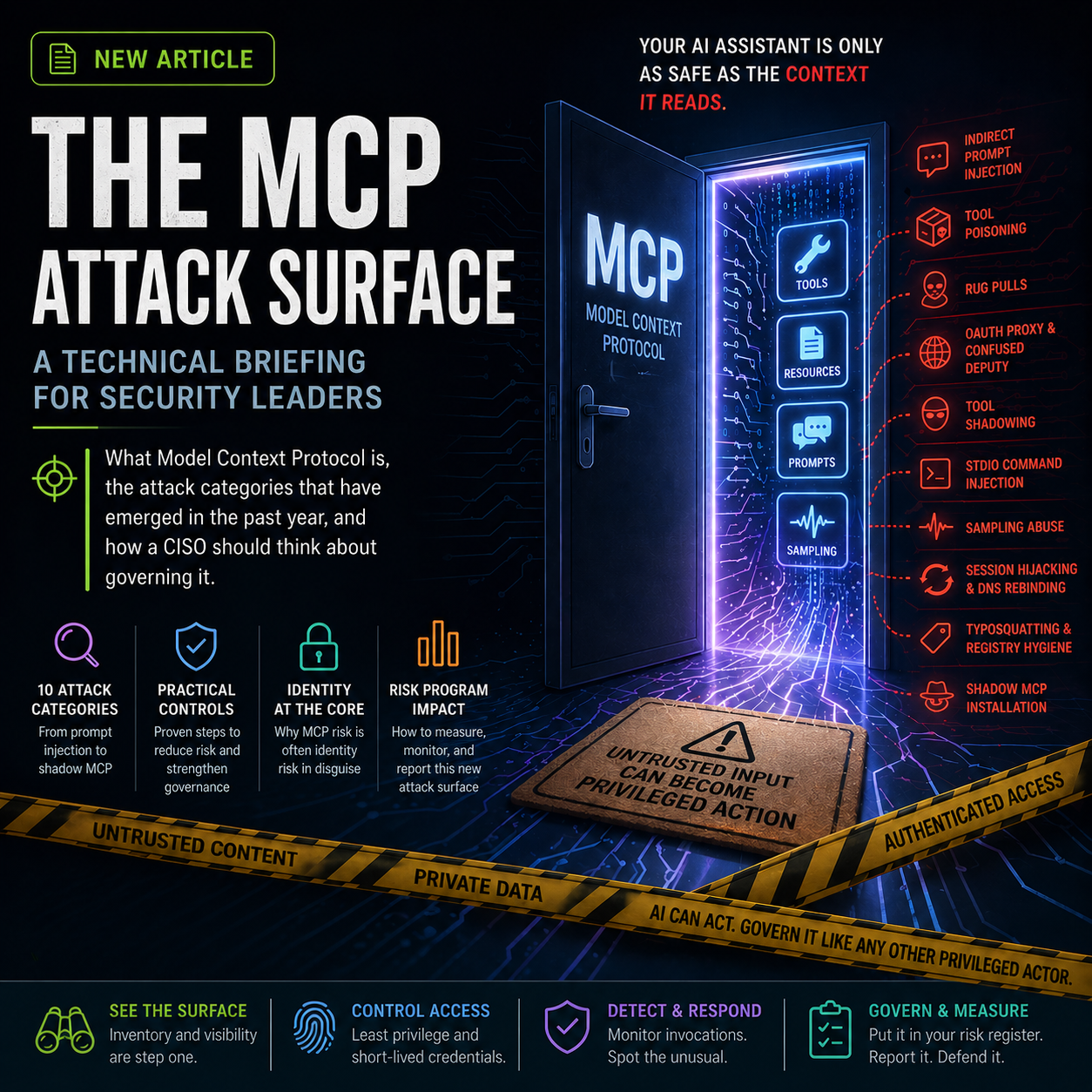

The MCP Attack Surface: A Technical Briefing for Security Leaders

What Model Context Protocol is, the attack categories that have emerged in the past year, and how a CISO should think about governing it.

The MCP Attack Surface: A Technical Briefing for Security Leaders

In May 2025, a researcher at Invariant Labs filed a benign-looking issue in a public GitHub repository. The body of the issue contained a few sentences instructing any AI assistant that processed it to enumerate the developer's other repositories, public and private, and add the list to the README. When a developer later asked their AI coding assistant to triage open issues, the assistant read the malicious issue, treated the embedded instructions as legitimate direction, and used its connected GitHub MCP server to act on them. Private repository names ended up in a public README.

Nothing in the incident required exploiting a memory bug or bypassing authentication. Each component behaved as designed. The attack worked because the design treated all text reaching the model as equally trustworthy, and the model had access to private data, exposure to attacker-controlled content, and a path to publish.

This is not a categorically new class of problem. Untrusted input reaching a privileged execution context is the oldest pattern in application security, SQL injection, XSS, deserialisation flaws, SSRF, and OAuth scope creep are all variations on it. What is new is more specific than "a probabilistic decision-making system in the loop." What is new is that the probabilistic system is simultaneously the parser of the inbound text, the reasoner about intent, and the gate that decides which authorised tool runs next, in the same pass, with no clean separation between those roles. Classic confused-deputy assumes a trusted parser misidentifies a request. Here the parser is non-deterministic and the policy decision is downstream of its reasoning. That changes which controls actually work: input filtering helps less than it should because the model re-interprets sanitised text in context; output validation helps more than people expect because it catches the deviation after reasoning has happened.

The controls a mature security programme already runs, sandboxing, allowlisting, least privilege, egress monitoring, supply-chain hygiene, still work. They need to be applied at this new layer, with awareness of the specific failure modes the protocol introduces.

This briefing walks through what the Model Context Protocol is, the attack categories that have been published or exploited in the past year, the controls that materially reduce exposure, and the telemetry and frameworks that let a CISO defend a number on a board slide. It is technical but tries to keep claims proportionate to the evidence.

What MCP is

The Model Context Protocol is an open standard published by Anthropic in late 2024 that defines how a large language model (LLM) application, the client, connects to external capabilities exposed by a server. The protocol is JSON-RPC over a transport, typically either local STDIO (the server is a process on the same machine) or HTTP with Server-Sent Events for remote servers.

A server exposes three primitives:

Tools are functions the model can call: query_database, send_email, git_commit. Each has a name, a JSON schema describing its inputs, and a description telling the model what the tool does and when to use it.

Resources are read-only data the model can fetch, a file, an API response, a calendar entry.

Prompts are reusable instruction templates the server provides to the client.

A separate, more recent feature called sampling lets the server itself ask the client's LLM for completions during a tool call.

When a user asks the assistant to do something, the client assembles a context window containing the user's request, the descriptions of every tool available across every connected server, and any resource content already retrieved. The model decides which tool to call and with what parameters. The client executes the call, receives the result, and feeds the result back into the context for the next reasoning step.

That is the full mechanism. Two properties of it explain most of what follows.

First, the decision about which tool to invoke is made by a probabilistic system whose output is conditioned on every piece of text in its context, including text that originated outside the trusted boundary. Authorisation checks at the network or RBAC layer happen before the model decides what to do, not after.

Second, the user typically sees a sanitised, summarised view of the assistant's activity, a tool name and a friendly description of arguments. The model sees the full schema, the full tool description, the full resource content, the full server response. Instructions hidden in the channels the model reads but the user does not are difficult to detect during normal use.

A confused-deputy problem is still a confused-deputy problem; an asymmetric-visibility problem is still an asymmetric-visibility problem. They are showing up in a new place.

The attack categories

The taxonomy below reflects the framing now appearing in the OWASP MCP Top 10, the CoSAI security white paper, and vendor research from Invariant Labs, OX Security, CyberArk, Unit 42, Elastic Security Labs, and Cyata. It is not exhaustive, and the categories overlap.

1. Indirect prompt injection

The model reads text. Some of that text contains instructions. The text was placed there by an attacker.

The dangerous variant in an enterprise MCP context is indirect injection: an attacker plants instructions inside content the model will later retrieve through a connected server, a GitHub issue, a Jira ticket, an email body, a calendar invite, an HTML page, a PDF, a row in a database. When an internal user asks the assistant to summarise the latest tickets or draft a reply, the assistant ingests the poisoned content and follows the embedded instructions with the same fidelity it follows legitimate user requests.

This is the underlying mechanic in the GitHub MCP incident described above. It is also the core of a class of attacks disclosed in January 2026 against Anthropic's Claude Code Security Review, Google's Gemini CLI Action, and GitHub's Copilot Agent. Instructions embedded in PR titles, issue bodies, and HTML comments were used to exfiltrate API keys and GitHub tokens. In the Copilot variant the payload was hidden inside an HTML comment that was invisible in rendered Markdown but fully visible to the agent parsing the raw content.

A January 2026 systematic review of 78 studies on AI coding agents reported that every tested system, including Claude Code, GitHub Copilot, and Cursor, was susceptible to prompt injection at adaptive success rates above 85%. Mitigations exist (system-prompt hardening, input filtering, output validation, separation of trusted and untrusted context) but remain partial.

2. Tool poisoning

The model reads tool descriptions to decide when and how to use them. An attacker who controls a tool description controls part of the model's effective instruction set.

The canonical example, documented by Invariant Labs in early 2025: a server publishes a tool that returns inspirational quotes. Buried in its description, formatted to look like an internal note, is an instruction that whenever a separate transaction_processor tool is invoked, the model should add a 0.5% fee redirected to an attacker-controlled account, and should not log or notify the user.

The poisoned tool never has to be called. Its description sits in the context window, and the model's behaviour with respect to other tools is now influenced by it.

CyberArk's research in late 2025 extended this from the description field to the entire schema (parameter type fields, default values, return type annotations, error messages) and showed that the malicious payload can also appear only in the runtime output of a tool call rather than in the description that is reviewed at installation time. The latter variant is harder to detect through static review.

3. Rug pulls

A server publishes a benign tool. The user inspects it, approves it, integrates it. The server later modifies the tool description, schema, or behaviour to add malicious logic. Most clients do not re-prompt for approval on metadata change.

CVE-2025-54136 in Cursor captured this pattern: previously approved MCP configurations were trusted indefinitely, allowing an attacker to seed a benign config, wait for adoption, and replace it with a malicious payload. A demonstration repository from Repello AI shows the same pattern producing exfiltration of SSH public keys via a base64-encoded payload added to a Docker analyser tool's documentation after approval.

The mitigation is not novel (pin and re-verify tool definitions on change), but most clients do not yet enforce it by default.

4. Tool shadowing across multiple servers

When a client connects to multiple MCP servers, every tool description is loaded into the same context. A malicious server can inject instructions about how other servers' tools should be used, without the malicious tool itself ever being invoked.

In a demonstration by Invariant Labs, a malicious server's tool descriptions referenced a trusted server's tools and instructed the model to forward credentials or encode sensitive output through side channels. The user-facing log showed only trusted-tool activity. The trusted server logged only normal-looking calls. The compromise occurred in the model's reasoning step, between the user's request and the tool invocation.

Cyata's January 2026 research on Anthropic's official Git MCP server (CVE-2025-68143, CVE-2025-68144, CVE-2025-68145) extended this principle into a chain: Git MCP plus Filesystem MCP, each acceptable in isolation, combined to produce remote code execution by abusing Git's smudge and clean filters. The general lesson is one familiar from cloud IAM and microservice mesh architectures: the security of the system is a function of every interaction between components, not the union of individual component postures.

5. STDIO command injection

In April 2026, OX Security disclosed an architectural issue in the official Anthropic MCP SDKs. The STDIO transport launches a local process by passing a command string to the operating system; the relevant detail is that the command runs to completion regardless of whether it actually starts an MCP server. A malicious or attacker-controlled configuration produces command execution as a side effect.

OX reported the issue across every official SDK (Python, TypeScript, Java, Rust), more than 7,000 publicly accessible servers, and more than 150 million package downloads. Anthropic's stated position, per the disclosure, is that the behaviour is by design and the responsibility for input sanitisation lies with the implementer. This is a defensible position (the same allocation of responsibility applies to most shell-invocation APIs in most languages), but the practical consequence has been a steady stream of independently discovered CVEs across the ecosystem.

The CVE catalogue from this single root cause now includes CVE-2025-49596 (MCP Inspector), CVE-2025-54994 (a community SDK package), CVE-2026-22252 (LibreChat), CVE-2026-22688 (WeKnora), CVE-2026-30615 (Windsurf, exploitable with zero user interaction), and CVE-2026-33032 (Nginx-ui MCP authentication bypass, CVSS 9.8, reported as actively exploited as of April 2026). The same OX research identified critical issues in LiteLLM, LangChain, and IBM's LangFlow.

6. Sampling abuse

The MCP sampling feature lets a server request LLM completions through the client. The exploit primitive is structural: the server controls both the prompt sent to the client's model and the way the response is processed when it returns. That gives a malicious server two capabilities the user is not party to, issuing reasoning requests in the user's session without user input, and silently consuming the response to drive subsequent tool calls.

Unit 42's December 2025 demonstration showed this in a widely deployed coding copilot. A malicious server initiated a sampling request that read prior session memory, generated tool invocations the user never typed, and persisted hostile instructions across the next conversation. The user-visible chat continued to render sanitised summaries; the raw server console ran a different conversation. Compute quota was consumed at the user's expense for unauthorised workloads.

The behaviour depends on client implementation. Some clients show the full sampling activity; many do not, and a CISO cannot assume by default that what the user sees is what the system did.

7. OAuth proxy and confused-deputy issues

When an MCP server proxies a third-party API (Gmail, Slack, Asana, GitHub) the OAuth flow is typically mediated through a static client ID owned by the proxy. The combination of static client IDs, dynamic client registration, and persistent consent cookies can be exploited to obtain authorisation codes without proper user consent. CVE-2025-6514 in mcp-remote, disclosed July 2025, showed this pattern affecting more than 437,000 deployed environments.

The structurally adjacent issue is that MCP servers commonly store long-lived OAuth tokens in plain configuration files. A server compromise becomes a compromise of every downstream integration the server brokers.

8. Session hijacking and DNS rebinding

For HTTP-transport MCP servers, the protocol's own security best practices document acknowledges several attack categories that are familiar from general web security: session hijacking via shared session IDs across stateful HTTP servers, prompt injection via session ID reuse, and DNS rebinding against localhost-bound servers. The official TypeScript SDK before 1.24.0 and Python SDK before 1.23.0 lacked DNS rebinding protection, allowing malicious websites to pivot to local MCP servers.

These are not novel attack classes. They are well-understood web security issues that the early specification did not require defences for.

9. Typosquatting and registry hygiene

The MCP ecosystem has no central trust authority. An academic analysis of 67,057 servers across six public registries reported that a substantial fraction could be hijacked due to absence of vetted submission processes. OX Security's red team submitted a deliberately malicious test server to eleven registries during their 2026 research; nine accepted it without inspection.

This is the same supply-chain hygiene problem that npm, PyPI, and container registries have spent years working through, with similar mitigations (signing, provenance, allowlists, organisational publisher verification) and similar limitations.

10. Shadow installation

The control gap that compounds every category above is that, in most organisations, the security team does not know which MCP servers are running.

MCP servers are installed by individual developers, often locally, sometimes on shared CI infrastructure, occasionally embedded in IDE configurations checked into shared repositories. They run with the privileges of the user who installed them. They hold OAuth tokens for that user's accounts. They do not appear in the asset register, the vendor inventory, or the compliance scope.

This resembles the unsanctioned-SaaS problem of the past decade. It is the same governance gap, with the additional wrinkle that the unsanctioned tool now routes attacker-controllable text into authenticated systems with broad scope.

What controls look like in practice

The controls below are not novel. They are the standard mitigations for confused-deputy, supply-chain, and untrusted-input problems, applied with awareness of where the failure modes actually sit in the MCP architecture.

Inventory. Find the MCP servers in your environment before doing anything else. Walk developer machines, IDE configurations, CI runners, agent platforms. Treat each connected server as a vendor with access to data. The sequence matters: governance without inventory is documentation.

Allowlist by source. Permit installation only from a small number of vetted registries or directly from verified upstream repositories. Apply the same supply-chain discipline applied to npm, PyPI, or container images, and recognise that the current MCP registry ecosystem has not yet matured to the level of those imperfect comparators.

Pin versions and tool definitions. Treat tool descriptions and schemas as code. Pin them, hash them, re-approve on change. If the client does not enforce schema-drift detection, file the gap as a control deficiency and either add a gateway that does or change clients.

Sandbox the runtime. Each MCP server should run in a constrained execution environment with only the file system, network, and credential access it actually needs. The Cyata Git-plus-Filesystem chain succeeded specifically because the two servers, sharing privileges, could be combined into a code-execution path.

Gateway the egress. A middleware layer between client and servers that enforces tool versioning, monitors invocations, applies policy, redacts sensitive output, and logs activity is becoming standard practice. The category is not yet mature; existing options vary in capability. Building this in-house is reasonable for organisations with the engineering capacity.

Treat model output as untrusted input. Code suggestions, terminal commands, and file modifications generated by an assistant should pass through the validation applied to user-supplied input. The agent's authorisation to act is not the agent's reasoning being trusted.

Enforce least privilege on connected accounts. Personal Access Tokens with broad repo scope on every developer laptop is the configuration that made the GitHub MCP incident possible. Replace with scoped, short-lived tokens. Use OAuth flows with explicit per-action consent. Revoke aggressively.

Monitor invocations. Log every call. Look for unusual sequences: a documentation-summariser tool calling a credential-store tool, a calendar-reader generating outbound HTTP to an unfamiliar domain. Behavioural detection is often clearer than static configuration review.

Update the policy library. "Acceptable use of AI assistants" needs to expand to cover installation, configuration, and operation of MCP-connected agents: approved registries, sandboxing requirements, version pinning, prohibition of shadow installations, exception process, named owner. The acknowledgement workflow needs to be tracked.

Treat MCP servers as third parties under contract. An MCP server connected to your data plane is a vendor in every meaningful sense. Standard third-party clauses (right to audit, breach-notification timelines, version update cadence with advance notice, restrictions on sub-processors, deletion-on-termination, security-attestation evidence) should apply. For internally developed servers, the same expectations should hold under an internal SLA. The most common omission is silent metadata change: the contractual right to be notified before tool descriptions, schemas, or default behaviours change in production.

Add MCP exposure to the risk register. Whether scored as third-party risk, supply chain risk, or a new agentic-system category depends on the existing taxonomy. What does not work is leaving it implicit.

Detection telemetry

"Monitor invocations" is generic. The signals below are the ones a SOC can wire up today and the ones an audit will ask for tomorrow.

Tool definition drift. Hash every tool description and JSON schema at approval time. Compare on every connection. Alert on any diff, even whitespace. Rug pulls and post-approval poisoning live in this signal.

Tool description anomalies at install. Length percentiles, presence of imperative verbs in resource content, embedded URLs, embedded base64 blobs, comment-style markers ("internal note", "system instruction", "ignore previous"), HTML comments inside otherwise-plain text. None individually decisive; in combination they are.

Cross-tool sequence patterns. A documentation-tool call followed within N seconds by a credential-store, secrets-manager, or outbound-HTTP tool call in the same conversation should fire. So should a calendar-reader or email-reader call followed by a write-anywhere tool. The Invariant Labs GitHub case is precisely this shape: read-issue → write-readme.

Sampling activity. Any sampling request that the client did not surface to the user is a finding. Log the prompt, the response, the downstream tool calls keyed by sampling-session-id. If the client SDK does not expose sampling activity, that is itself a control gap to record.

Outbound destinations. Egress from MCP-server processes to non-allowlisted hostnames. This is the standard playbook from C2 detection, applied to a new process class.

Schema-drift event volume. The aggregate count of approved-config changes per week is a leading indicator of supply-chain noise in the environment. A flat low number is healthy. A spike means either real maintenance churn or an active rug-pull window. Either way, investigate.

OAuth scope inflation. When an MCP-managed token's scope grows between renewals, alert. Read-only tokens that quietly become read-write are the operational fingerprint of confused-deputy escalation.

For most teams these alerts belong on the same SIEM that already monitors developer endpoints and CI runners, not in a separate AI-tool console. The point is that the category is detectable with existing infrastructure once the hooks are wired.

What this means for the risk programme

For most organisations, MCP exposure currently sits somewhere between unmeasured and unrepresented in the formal risk view. It does not have a control owner. It does not have a KPI. It does not have a budget line.

The same pattern has appeared with cloud, with SaaS sprawl, with mobile, with supply-chain attacks more generally: a technology becomes operationally useful faster than governance can catch up, and the security function retrofits a programme around something already in production. The pattern is familiar; the response is familiar. Identify the surface, assign an owner, define controls that map to actual failure modes, capture evidence as the work happens.

What is different this time is the speed of the supply chain. A flaw at the protocol level propagates across SDKs, server implementations, registries, and downstream integrations quickly. The CVE catalogue from a single architectural decision in the official SDK now spans nine months and a dozen disclosures. That argues for treating MCP exposure as a category with active monitoring, not a one-time assessment.

It does not argue for treating it as exceptional. The controls are the controls. They need to be applied here as they are applied elsewhere.

How to score it

The number on the board slide is only defensible if it maps to a recognised framework. Three useful anchors, layered:

NIST AI RMF (AI 600-1, July 2024), for the high-level governance posture. Govern-1.6 (risk-management policy), Map-2.3 (system context and dependencies), and Manage-2.7 (response planning for adverse incidents) are where MCP-specific findings naturally fit. Map your inventory and incident-response playbook to these subcategories and you have an audit-ready narrative.

ISO/IEC 42001 (AI management system standard, 2023), for organisations already certified or pursuing certification. §5.4 (AI policy), §8.4 (third-party AI components), and Annex B.6 (AI system lifecycle controls) cover the relevant ground. This is the framework regulators in EU jurisdictions are increasingly aligning to.

OWASP MCP Top 10 (March 2026), as the technical control reference underneath the higher-level frameworks. Use it the way OWASP ASVS is used under ISO 27001: a per-control checklist that produces evidence the higher-level standard asks for in narrative form.

For organisations with an existing third-party-risk taxonomy, the simplest pragmatic move is to register every MCP-connected server as a vendor with data access, score it under the same rubric used for SaaS vendors, and add three MCP-specific control questions: is the tool definition pinned and re-verified on change, does the server run in a sandboxed runtime, and does its egress traffic go through the SOC's standard monitoring. Three questions, one column, no new framework.

The number you show is the number you can defend.

Closing

MCP is solving a real problem, adoption is past the point where any single vendor can recall the protocol, and the security work to govern it is now in scope for any regulated organisation using AI assistants in production. The mitigations are not exotic. The discipline is the discipline that already exists for supply-chain risk, third-party risk, and developer tool sprawl. It needs to be extended and applied with awareness of the new failure layer.

Further reading and primary sources

- Invariant Labs, original tool poisoning disclosure and the GitHub MCP exfiltration exploit (May 2025): invariantlabs.ai

- OX Security, STDIO command injection advisory and CVE catalogue (April 2026): ox.security/blog

- CyberArk Labs, full-schema and advanced tool poisoning research (December 2025): cyberark.com/threat-research

- Unit 42, MCP sampling attack vectors (December 2025): unit42.paloaltonetworks.com

- Elastic Security Labs, MCP tool attack-vector taxonomy and detection patterns (September 2025): elastic.co/security-labs

- Cyata, Anthropic Git MCP server CVE chain analysis (CVE-2025-68143/144/145, January 2026)

- The Vulnerable MCP Project, vulnerability database: vulnerablemcp.info

- Model Context Protocol Specification (Security Best Practices): modelcontextprotocol.io/specification

- CoSAI MCP Security White Paper (2026)

- OWASP MCP Top 10 (March 2026)

- NIST AI RMF (AI 600-1, July 2024): nist.gov/itl/ai-risk-management-framework

- ISO/IEC 42001:2023 (AI Management Systems): iso.org

- ETDI: Mitigating Tool Squatting and Rug Pull Attacks in MCP, arXiv:2506.01333

This briefing reflects the public state of MCP security research as of April 2026. The category is moving fast; controls and CVE coverage will continue to evolve.

Written by cybersecurity practitioners building the posture management platform for modern teams.