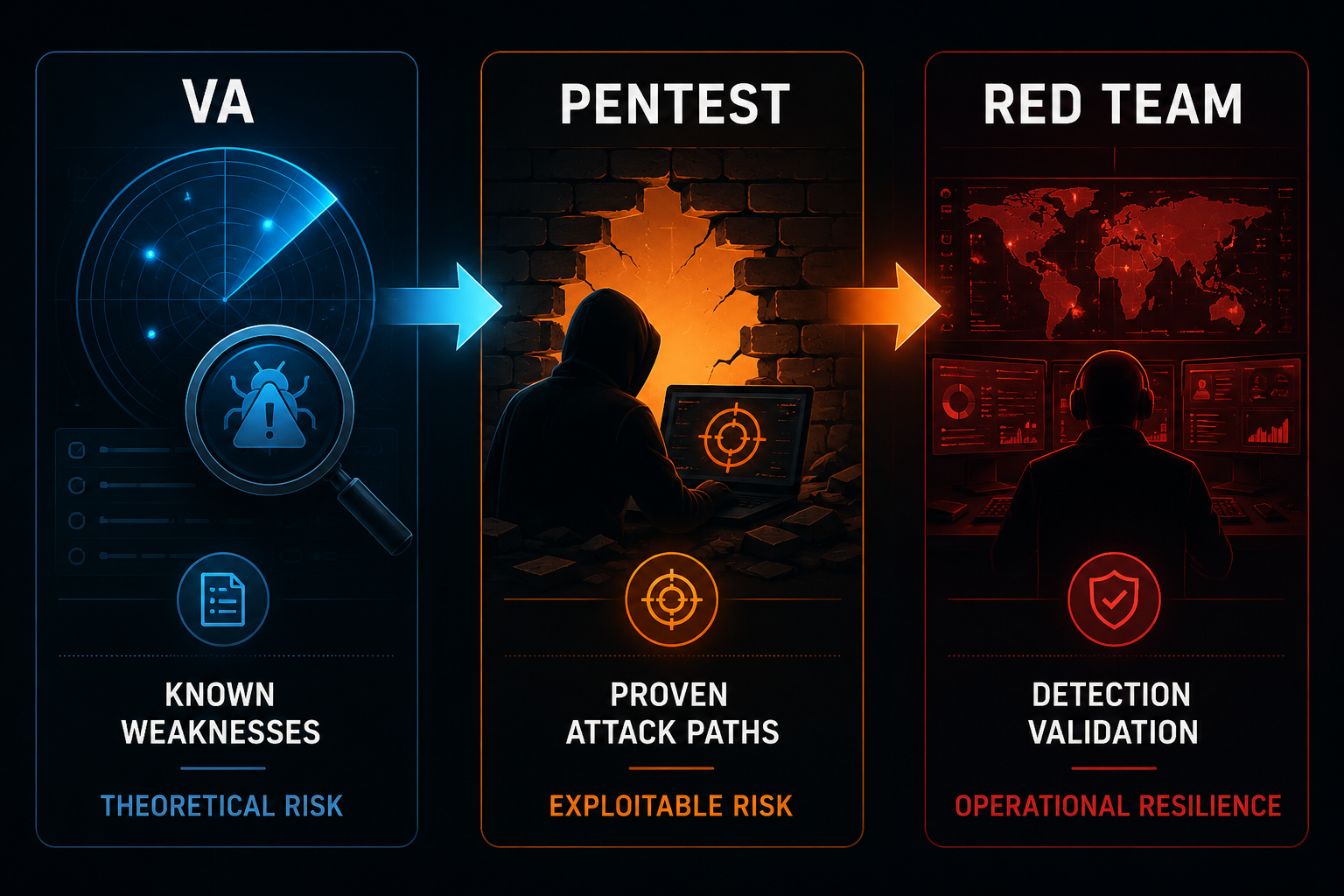

Vulnerability Assessment, Penetration Test, Red Team: Three Different Things, Three Different Invoices

VA, pentest, and red team are not synonyms. A CISO's guide to what each one actually delivers, when you need each, and how vendors blur the lines.

If you have ever sat in a procurement meeting where a vendor pitches a "comprehensive red team penetration test with full vulnerability coverage," congratulations. You have just been quoted on three different services bundled into one price tag. Two of them are probably not what you actually need, and one of them is almost certainly not what is being delivered.

This is one of the most consistently muddled topics in offensive security, and the confusion is not accidental. The boundaries are real, the goals are different, and the deliverables look nothing alike when you actually read the report. Yet every year, security teams pay pentest prices for vulnerability scans, expect red team outcomes from pentests, and get neither.

Here is what each of these is, what it is not, and how to tell them apart before you sign the SOW.

TL;DR (read this if nothing else)

| Question | Vulnerability Assessment | Penetration Test | Red Team |
|---|---|---|---|
| What is the goal? | Find known weaknesses | Prove exploitability | Test detection and response |
| What is the output? | A list of findings | A narrative of how an attacker got in | A narrative of how an attacker stayed in |
| Who runs it? | Mostly tools | Humans with tools | Humans emulating a specific adversary |
| How long does it run? | Hours to days | One to four weeks | Four to twelve weeks |
| Are blue team / SOC informed? | Yes | Yes | No (that is the point) |
| Does scope include people, physical, OSINT? | No | Sometimes | Yes |
| What primarily measures success? | Coverage of known CVEs | Number and depth of valid attack paths | Detections triggered (or not) |
| Typical maturity prerequisite | None | Patching, basic IAM | Mature SOC and IR program |
| Frameworks | NIST SP 800-115, CVSS | OWASP, PTES, OSSTMM | MITRE ATT&CK, TIBER-EU, CBEST |

If you do not have a SOC capable of detecting and responding to a real attack, a red team will tell you that, expensively. If you have never patched anything, a pentest will tell you that, expensively. Sequence matters.

Vulnerability Assessment: the inventory of known weaknesses

A vulnerability assessment (VA) is, in its purest form, an automated discovery exercise. You point a tool at a network, an application, or a cloud account, and the tool produces a list of every known issue it can identify against the asset. Known issues means CVEs in the public databases (NVD, MITRE), misconfigurations against benchmarks (CIS, STIG), exposed services, weak ciphers, missing patches.

The defining trait is that nothing is exploited. The tool fingerprints software versions, parses banners, queries APIs, and matches what it finds against a signature database. If a server is running Apache 2.4.49 and a CVE database says that version has a path traversal vulnerability, the VA reports it. The tool does not actually try to traverse the path. It assumes the CVE applies if the version matches.

That assumption is where VAs both succeed and fail.
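To make the version-matching assumption concrete, here is a minimal sketch of what a scanner's matching step amounts to. The `SIGNATURES` table, the `Fingerprint` type, and the host names are hypothetical and invented for illustration; real scanners use far larger vendor-maintained feeds and more fingerprinting sources.

```python
from dataclasses import dataclass

# Hypothetical, hand-written signature table: (product, version) -> advisory.
# A real scanner ships a much larger, vendor-maintained feed.
SIGNATURES = {
    ("apache-httpd", "2.4.49"): "CVE-2021-41773 (path traversal)",
    ("log4j-core", "2.14.1"): "CVE-2021-44228 (Log4Shell)",
}


@dataclass(frozen=True)
class Fingerprint:
    host: str
    product: str
    version: str


def match_signatures(fingerprints):
    """Return (host, advisory) pairs for every fingerprint with a known signature.

    Nothing is sent to the target here: the match is a dictionary lookup on
    the version string, so reachability never enters the result.
    """
    findings = []
    for fp in fingerprints:
        advisory = SIGNATURES.get((fp.product, fp.version))
        if advisory is not None:
            findings.append((fp.host, advisory))
    return findings


observed = [
    Fingerprint("web-01", "apache-httpd", "2.4.49"),
    Fingerprint("web-02", "apache-httpd", "2.4.58"),
]
findings = match_signatures(observed)
print(findings)
```

The function has no input describing network position, code paths, or runtime flags, which is exactly the blind spot the next section walks through.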

A concrete failure mode: Log4Shell that is not Log4Shell

Take CVE-2021-44228. A scanner finds log4j-core-2.14.1.jar on the classpath of an internal service and reports a critical. The finding is technically accurate: that JAR is vulnerable. But in this specific deployment:

- The library is shaded into a third-party dependency that never invokes any of the affected log methods.

- The service runs behind an authenticated API gateway on a private VPC.

- The JVM was started with `-Dlog4j2.formatMsgNoLookups=true`, which disables the JNDI lookup feature the exploit depends on.

Three independent layers of unreachability, and the scanner sees none of them. It sees a version string. It cross-references a database. It writes "Critical, CVSS 10.0." The remediation team spends a week chasing it, escalates to engineering, gets pulled into a war room, and eventually discovers the actual path of exploitation does not exist in this deployment.

The same scanner, on a different host in the same estate, might flag the identical JAR on a service that is internet-facing, invokes the vulnerable code path, and did not set the JVM flag. That one is a real critical. The scanner cannot tell you which is which. It tells you both are critical, and you find out the difference by reading the code, exploiting it, or getting breached.

This is the gap a VA cannot close. Coverage is high; signal-to-noise is not.
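One way to picture the triage a human adds on top of the scanner output is the sketch below. It assumes three context facts taken from the example above; the `DeploymentContext` fields, the rule order, and the output labels are illustrative assumptions, not a standard scoring method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DeploymentContext:
    invokes_vulnerable_code: bool  # does the service call the affected log methods?
    internet_facing: bool          # reachable without an authenticated gateway?
    lookups_disabled: bool         # JVM started with -Dlog4j2.formatMsgNoLookups=true?


def triage(scanner_severity: str, ctx: DeploymentContext) -> str:
    """Turn a raw scanner severity into a triage label (illustrative rules only)."""
    if not ctx.invokes_vulnerable_code or ctx.lookups_disabled:
        return "deprioritise, still patch"
    if ctx.internet_facing:
        return f"urgent: {scanner_severity} confirmed reachable"
    return "verify manually"


internal_service = DeploymentContext(
    invokes_vulnerable_code=False, internet_facing=False, lookups_disabled=True
)
exposed_service = DeploymentContext(
    invokes_vulnerable_code=True, internet_facing=True, lookups_disabled=False
)

print(triage("Critical", internal_service))
print(triage("Critical", exposed_service))
```

The scanner supplies only `scanner_severity`. Every field in `DeploymentContext` has to come from someone reading the code, the network design, or the startup flags.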

Where VAs win

- Coverage. A scanner can look at ten thousand IPs in a night. No human can.

- Repeatability. Run it weekly, monthly, after every deploy. Compare deltas.

- Cost per finding. Once you have the licence, the marginal cost approaches zero.

- Compliance. SOC 2, ISO 27001, PCI DSS all expect regular scanning, often with specific cadence.
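The "compare deltas" step in the repeatability point can be sketched as a set comparison between two scan runs. This assumes each scan has been reduced to `(host, finding_id)` pairs; the host names and finding identifiers below are made up for the example.

```python
def scan_delta(previous, current):
    """Compare two scans, each an iterable of (host, finding_id) pairs."""
    previous, current = set(previous), set(current)
    return {
        "new": sorted(current - previous),         # appeared since the last run
        "resolved": sorted(previous - current),    # no longer reported
        "persisting": sorted(previous & current),  # still open
    }


last_week = {("web-01", "CVE-2021-41773"), ("app-07", "CVE-2021-44228")}
this_week = {("app-07", "CVE-2021-44228"), ("db-02", "weak-tls-cipher")}

delta = scan_delta(last_week, this_week)
for bucket, items in delta.items():
    print(bucket, items)
```

Reviewing only the "new" and "persisting" buckets each cycle is one way to keep a weekly scan from turning into a weekly re-triage of the whole estate.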

Where VAs fail

- False positives, as in the Log4Shell example above. Triaging a long VA report eats days, and the answer is often "this is unreachable."

- False negatives. Custom application logic, business logic flaws, broken authentication, IDOR, SSRF in proprietary endpoints: scanners do not find these. They are not in the signature database because every application is different.

- No exploitability proof. "CVE-2024-XXXX is present" tells you nothing about whether it is reachable from your threat model.

A VA report is a starting line, not a finish. If your only offensive-security work is a quarterly Nessus or Qualys run, you have inventoried your problems. You have not tested whether they are actual problems.

You need a VA when

- You need a defensible answer to "do you scan?" on a security questionnaire.

- You want continuous coverage between deeper engagements.

- You are building a vulnerability management program and need a baseline.

- You manage a large estate (thousands of hosts) and need triage signal.

Frameworks and standards

- NIST SP 800-115 (Technical Guide to Information Security Testing and Assessment)

- CVSS for scoring

- CIS Benchmarks for misconfiguration baseline

- OWASP ASVS for application coverage targets

Penetration Test: the proof of exploitability

A penetration test moves from "this looks vulnerable" to "I exploited it, here is the impact." The single most useful definition: a pentest is a time-boxed, scope-bounded attempt to chain real weaknesses into demonstrable business impact, performed by humans.

The deliverable is not a list. It is a narrative. A real pentest report tells you:

- The tester started here (recon, scope entry point, given credentials).

- They found this specific weakness by doing X.

- They combined it with this other thing to get here.

- From there, they reached this critical asset, or this customer's data, or this admin role.

- To stop this, fix Y. To detect this in future, log Z.

Note what is missing: there is no claim of completeness. A pentest does not say "your system is secure." It says "in this many days, with this scope, here is what we got. What you do not see in this report is either out of scope, out of time, or genuinely missed."

Pentest types matter, and they are not interchangeable.

By scope

- External: from the public internet, no credentials. Tests perimeter and exposure.

- Internal: assumed compromised laptop or VPN access. Tests lateral movement.

- Web application: scoped to a single app or set of apps. Tests OWASP Top 10, business logic, auth/session, IDOR, SSRF, etc.

- Mobile: iOS or Android client plus its backend.

- API: REST, GraphQL, gRPC. Heavy on auth, rate limiting, mass assignment, BOLA.

- Cloud configuration: IAM permissions, exposed buckets, lateral paths in AWS / GCP / Azure.

- Wireless: rogue AP, WPA enterprise weaknesses, captive portal bypass.

- Social engineering: phishing, vishing, pretexting (when scoped in).

By visibility

- Black box: tester has no info beyond the target name.

- Grey box: tester gets some context (architecture diagram, low-priv credentials).

- White box (a.k.a. crystal box): tester gets source code, infrastructure access, design docs.

White box gets you the most coverage per dollar. Black box gets you the most realistic external-attacker simulation. Grey box is the middle path most engagements use.

Where pentests win

- They prove or disprove specific findings. The report has receipts.

- They surface business logic flaws no scanner finds.

- They are auditor-recognised. SOC 2 Type II, PCI DSS, HIPAA, and NIS 2 (in the EU) all expect or require pentests at defined intervals.

- They train your team. A good pentester debriefs on how to detect what they did.

Where pentests fail

- Time-boxed. A skilled tester with two weeks finds different things than a real attacker with twelve months.

- Scope-bounded. If the in-scope assets do not include the actual crown jewel, the test misses the real risk.

- Hard to compare across vendors. Two reputable firms can produce wildly different reports for the same target. Methodology, tester skill, and scope interpretation all swing the outcome.

- Tester quality varies enormously. The same SOW can be filled by an OSCP-grade specialist or by someone running automated scans and writing them up nicely. Procurement rarely catches the difference.

You need a pentest when

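- A standard requires it (SOC 2 Type II typically annually; PCI DSS at least annually for both external and internal testing, plus after significant changes, with the quarterly PCI requirement applying to vulnerability scans rather than pentests; NIS 2 risk-based).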

- You shipped a major change to authentication, authorisation, or data flow, and you want a human to look at it.

- A customer asks for one as part of vendor due diligence.

- You suspect a class of vulnerability (e.g. you just discovered IDOR in one place; you want a sweep).

Frameworks and methodologies

- PTES (Penetration Testing Execution Standard)

- OWASP Web Security Testing Guide (WSTG) and OWASP ASVS

- OSSTMM (more academic, less commonly applied)

- NIST SP 800-115 (overlaps with VA standards)

- For cloud: the MITRE ATT&CK Cloud Matrix, Scout Suite findings as a baseline

Red Team: the test of detection and response

A red team engagement is not a pentest with a fancier name. It tests something fundamentally different. A pentest answers "is this exploitable?" A red team answers "would we have caught it?"

The unit of value is detection, not exploitation. A red team that compromised the environment but generated zero alerts is more useful than a red team that smashed through but tripped every sensor on the way. The first one tells you your monitoring is blind. The second one tells you your monitoring works.

To make this real, red team engagements typically share these traits:

- Long duration. Weeks to months. Real attackers do not work in two-week sprints, and your detections need to hold up over time.

- Quiet operations. The team picks tooling and tradecraft that emulate a specific threat actor (e.g. a financially motivated criminal group or a specific APT). They route through their own infrastructure. They sleep when defenders sleep.

- Multi-vector. Phishing, credential reuse, OSINT, occasionally physical access (badge cloning, tailgating, social engineering at reception). Whatever a real adversary would actually do.

- Goal-oriented. Defined objectives like "exfiltrate data from the customer billing system" or "achieve domain admin and persist for thirty days undetected." Not a list of vulnerabilities to find.

- Blue team blind. Only the CISO, a small steering committee, and possibly the legal team know it is happening. The SOC, IR team, and IT staff are not informed. That is the entire test.

A red team report does not look like a pentest report. It is structured around the kill chain or, more commonly today, MITRE ATT&CK.

A typical red team finding reads:

"On day 9 we sent a spear phishing email to 14 finance staff using a pretext from open-source profiles of the CFO's calendar. Three users entered credentials on a typo-squatted domain. We accessed Microsoft 365 with one set, located a Power Automate flow with embedded service account credentials, and pivoted to an internal Jira instance. From Jira, we obtained a Slack token in a public channel and accessed the engineering Slack. Detection summary: zero of these 11 actions generated an alert. Two would have been detectable with existing logging if a rule were configured. Six are not currently logged at all."

The detection summary at the end is the entire point.
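A detection summary like the one in that finding is essentially a tally over a log of red team actions. The sketch below assumes a simple three-way outcome per action; the `Outcome` categories, the sample actions, and the ATT&CK technique mappings are illustrative assumptions and do not reproduce the numbers in the quoted finding.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    ALERTED = "alert fired"
    LOGGED_NO_RULE = "logged, no rule configured"
    NOT_LOGGED = "not logged"


@dataclass(frozen=True)
class Action:
    day: int
    technique: str    # ATT&CK technique ID (mapping here is illustrative)
    description: str
    outcome: Outcome


def detection_summary(actions):
    """Count actions per outcome, including outcomes that never occurred."""
    counts = Counter(a.outcome for a in actions)
    return {o.value: counts[o] for o in Outcome}


actions = [
    Action(9, "T1566.002", "Spear phishing link sent to finance staff",
           Outcome.NOT_LOGGED),
    Action(9, "T1078.004", "Login to cloud tenant with phished credentials",
           Outcome.LOGGED_NO_RULE),
    Action(10, "T1552.001", "Service account credentials read from a flow",
           Outcome.NOT_LOGGED),
]

summary = detection_summary(actions)
print(summary)
```

The "logged, no rule configured" bucket is usually the cheapest to fix, because the telemetry already exists and only the detection rule is missing.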

Where red teams win

- They stress-test the parts of your security program that you cannot test any other way. Your SOC's actual response speed. Your IR runbook on a Saturday at 11pm. Your phishing-aware culture under specific pretexts.

- They produce assumed-breach drills as a byproduct. After-action reviews train your team in ways that no tabletop exercise can match.

- They are the closest thing to a real adversary engagement you can buy.

Where red teams fail

- They are expensive. Often five to fifteen times the cost of a comparably scoped pentest, and that is before the social engineering and physical legs.

- They are wasted on immature programs. If your patching is broken and you have no SOC, a red team will tell you the building is on fire. You already knew. You needed a pentest to map the fires, not a red team to confirm the smoke.

- They require executive sponsorship. Things break. People get phished. Tickets get filed. The CEO needs to know what is happening before the General Counsel gets called in.

- They are easy to mis-scope. "Find anything you can in three weeks" is not a red team. It is just an unstructured pentest.

You need a red team when

- You have a mature SOC with documented detection rules and you want to test them under realistic adversary tradecraft.

- You are subject to TIBER-EU (financial sector in EU), CBEST (UK financial), or equivalent regulator-driven threat-intelligence-led testing programs.

- You are about to claim a security maturity level publicly and want an honest, adversarial check before you do.

- Your last several pentests came back clean and you want to know whether that reflects your environment or your testers' methodology.

Frameworks and standards

- MITRE ATT&CK (the lingua franca of red team reporting)

- TIBER-EU (Threat Intelligence-Based Ethical Red-teaming)

- CBEST (Bank of England)

- iCAST (Hong Kong)

- The Red Team Field Manual and its successors (reference, not a standard)

Why people confuse these three

Five reasons. They compound.

1. Vendors deliberately blur the labels. "Red team penetration test" is a common offering. It sounds like the highest-tier service. In practice it is usually a pentest with two phishing emails added. Vendors that sell mostly pentests have an incentive to rebrand them upmarket. Buyers that have never seen a real red team report cannot tell the difference until they see one side by side.

2. Buyers ask for the wrong thing because they have heard the word. A board member reads about a breach attributed to APT29, asks "are we red teaming?" and the CISO buys what is on offer to satisfy the question. The result is a pentest charged at red team rates, delivering pentest value and answering the wrong question.

3. Auditors do not always distinguish. SOC 2 Type II audits commonly expect annual "penetration testing," but the criteria do not prescribe a methodology and the auditor rarely specifies one. Some firms read "penetration testing" and run a vulnerability scan with a tester writing it up as a report. The auditor accepts. The CISO thinks they have a pentest. They do not.

4. Cost-cutting compresses scope. A real red team is six figures. A real external pentest of a single web application is mid five figures. Buyers under pressure cut scope, then call the result by the original name. A two-day external scan plus a phishing email is now sold as a "red team."

5. The terms have legitimate overlap. Red teams use pentest techniques. Pentests use VA tooling. The procedural lines blur even when the strategic intent is different. People who do this for a living can usually tell the difference within five minutes of reading the report. People who buy it once a year often cannot.

The cost-of-confusion test is simple. Read the report's table of contents. If the document is organised around a list of CVEs and CVSS scores, you have a vulnerability assessment. If it is organised around a series of attack paths with proof-of-impact screenshots, you have a pentest. If it is organised around MITRE ATT&CK techniques with a "did our blue team detect this?" column, you have a red team. If it is organised around all three loosely, you probably have an expensive vulnerability assessment with a creative cover page.
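The table-of-contents test above can be written down as a small decision function. This is a sketch under the assumption that you have already skimmed the report and noted which structural elements it is organised around; the element names (`cve_list`, `attack_paths`, `attack_techniques_with_detection`) are hypothetical labels chosen for this example.

```python
def classify_report(organised_around):
    """Classify a report from the structural elements in its table of contents."""
    signals = {
        "vulnerability assessment": "cve_list" in organised_around,
        "penetration test": "attack_paths" in organised_around,
        "red team": "attack_techniques_with_detection" in organised_around,
    }
    matched = [name for name, present in signals.items() if present]
    if len(matched) == 1:
        return matched[0]
    if len(matched) == 3:
        # Loosely organised around all three: see the warning in the text.
        return "probably an expensive vulnerability assessment"
    return "unclear: ask the vendor"


print(classify_report({"cve_list"}))
print(classify_report({"attack_paths"}))
print(classify_report(
    {"cve_list", "attack_paths", "attack_techniques_with_detection"}
))
```

The function is deliberately crude. It encodes the reading heuristic, not a judgment on the quality of the work behind the report.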

How to sequence them

Mature programs run all three at different cadences. Immature programs should not.

If you have not patched in six months: start with a vulnerability assessment. Get the asset inventory and the patching cadence right. A pentest will just confirm what the scanner already told you, at twenty times the cost.

If you have a working patching program but no human has ever tested your authentication logic: schedule a pentest. Scope it to the highest-impact application or the most exposed segment. Get a senior tester. Read the report carefully and remediate before scheduling the next one.

If your SOC has documented detection rules, your IR runbook is rehearsed, and your last two pentests came back with mostly informational findings: consider a red team. Scope it to a specific objective tied to your real threat model. Brief one executive sponsor and the legal team. Tell nobody else.

If you operate in regulated finance, energy, or critical infrastructure in the EU: you may be obligated to run a TIBER-EU style engagement regardless of maturity. In that case, the red team is the point of compliance, not the optimisation.
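The four cases above reduce to an ordered set of checks. The sketch below is one possible encoding; the `ProgramState` fields and the returned labels are assumptions made for illustration, and a real decision would weigh threat model and budget as well.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProgramState:
    patching_works: bool
    auth_logic_human_tested: bool
    soc_rules_documented: bool
    ir_runbook_rehearsed: bool
    recent_pentests_mostly_informational: bool
    regulator_mandates_red_team: bool = False


def next_engagement(s: ProgramState) -> str:
    """Suggest the next engagement type, checking the cases in priority order."""
    if s.regulator_mandates_red_team:
        return "red team (regulatory obligation)"
    if not s.patching_works:
        return "vulnerability assessment"
    if not s.auth_logic_human_tested:
        return "penetration test"
    if (s.soc_rules_documented and s.ir_runbook_rehearsed
            and s.recent_pentests_mostly_informational):
        return "consider a red team"
    return "penetration test"


unpatched = ProgramState(False, False, False, False, False)
mature = ProgramState(True, True, True, True, True)

print(next_engagement(unpatched))
print(next_engagement(mature))
```

The order of the checks carries the argument: maturity gates the more expensive engagements, and only a regulatory mandate overrides that ordering.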

A useful rough cadence for a mid-stage SaaS company:

- Vulnerability assessment: continuous, with full reports reviewed monthly

- External pentest: annually

- Web app pentest: per major release (or annually if releases are small)

- Internal / cloud pentest: annually

- Red team: every two to three years once SOC is mature

These are starting points. Threat model, regulator, and customer demand can shift any of them.

What to ask before signing the SOW

Three questions. Ask them in this order. The answers tell you what you are actually buying.

- "What is the unit of success in this engagement?" A VA vendor will say coverage or finding count. A pentest vendor will say impact demonstrated or attack paths proven. A red team vendor will say objectives achieved or detections evaded. If they say "comprehensive coverage of the attack surface," they are selling a VA in a pentest jacket.

- "Will the SOC and IR team be informed before the engagement?" A pentest will be coordinated with operations to avoid false alerts and outages. A red team will not. If the vendor says "of course we coordinate with your team," you are not buying a red team, regardless of the title.

- "Show me a redacted sample report from a previous engagement of the same type." Read it. The structure and language tell you exactly what they deliver. If the sample looks like a Nessus export with extra commentary, do not pay pentest rates.

A fourth question, optional: "Who specifically will be on the engagement, and can I see their previous five reports?" Tester quality is the single largest variable in this market. Junior consultants doing a red team will produce worse output than senior pentesters doing a pentest. Names matter.

What this looks like for a real CISO program

The ideal end state is a security program where:

- Vulnerability data flows continuously from automated scanning into a single risk register.

- Pentest findings get triaged into the same register, with severity adjusted for proven exploitability rather than theoretical CVSS.

- Red team findings drive detection-engineering work and update the SOC's detection coverage map.

- Each layer informs the next. The VA tells you what is theoretically broken. The pentest tells you what is actually exploitable. The red team tells you what your monitoring would actually catch.
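A minimal sketch of such a shared register follows, assuming each weakness on an asset is a single record that accumulates evidence from different engagement types. The `Fidelity` levels, class names, and sample data are hypothetical and do not describe any particular product's data model.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Fidelity(IntEnum):
    THEORETICAL = 1  # vulnerability assessment: version or config match
    EXPLOITED = 2    # penetration test: exploitation demonstrated
    UNDETECTED = 3   # red team: exploited and monitoring missed it


@dataclass
class RiskRecord:
    asset: str
    weakness: str
    evidence: list = field(default_factory=list)  # (source, Fidelity) pairs

    @property
    def fidelity(self):
        """Highest fidelity seen so far, or None if there is no evidence."""
        return max((f for _, f in self.evidence), default=None)


class RiskRegister:
    def __init__(self):
        self._records = {}

    def record(self, asset, weakness, source, fidelity):
        """Attach evidence to the existing record for this asset and weakness."""
        key = (asset, weakness)
        rec = self._records.setdefault(key, RiskRecord(asset, weakness))
        rec.evidence.append((source, fidelity))
        return rec

    def __len__(self):
        return len(self._records)


register = RiskRegister()
register.record("jira-prod", "outdated plugin", "VA scan", Fidelity.THEORETICAL)
rec = register.record("jira-prod", "outdated plugin", "pentest", Fidelity.EXPLOITED)

print(len(register), rec.fidelity.name, len(rec.evidence))
```

Keying on `(asset, weakness)` is what prevents the duplicates: the pentest finding upgrades the existing record instead of creating a second one.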

Most programs do none of this. They treat each engagement as a standalone deliverable, file the PDF, and move on. The VA report contradicts the pentest report contradicts the red team report and nobody reconciles them. The risk register has duplicates and gaps. The CISO ends up answering audit questions from three different documents that do not agree.

The bridge is a system that treats risks, controls, evidence, and objectives as one connected loop, where a VA finding about a missing patch and a pentest finding about an exploited Jira plugin and a red team finding about a missed alert are all the same record at different fidelity levels. Without that, you are running three programs that pretend to be one.

That, incidentally, is what we built Aertous to do. But that is a conversation for another post.

Written by cybersecurity practitioners building the posture management platform for modern teams.